Generate Feature Report in Power Automate directly from Feature Layer

EDIT: Just going to link back to my main Power Automate post to keep things connected.

There are different ways to do this, but I thought that I would showcase the one I am going to be using relatively soon.

The idea is that the S123 webhook that listens for submissions isn't 100% reliable, and a missed submission can be detrimental to a project. However, even when a submission skips the webhook, it still lands in Portal. The goal of this workflow is to watch the Feature Layer directly, and run processes off of that.

General Steps

1.

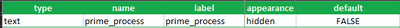

I started by adding a single field to a survey. This will be your "control field". You can name this field whatever you want, and the values within it can be whatever makes sense to you. My "control field" will be a TRUE/FALSE:

Now I can tell which items haven't been processed yet.

2.

In Power Automate, add a trigger. For my tests, I used a Manual Trigger, but once in production, it will be on a Schedule.

3.

Add a new step. Search for "Esri" and click on your proper environment (Enterprise = Purple and AGO = Orange).

More details on these connectors are here.

4.

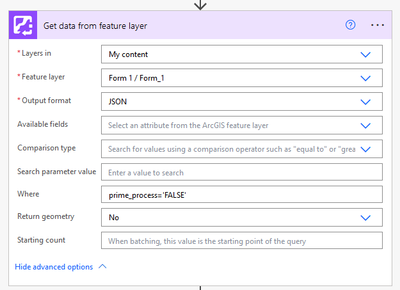

Select "Get data from feature layer". Configure the three required fields by pointing to the survey you are building for, and choose JSON as the output.

The only other important item here is the Where clause (e.g., prime_process = 'FALSE'). Without that, you won't process the proper item(s). I am using my newly created "control field" referenced in Step 1.

"Return geometry" is optional and you can probably leave that as "Yes".

More details on connector limitations are here (e.g., Return Geometry).
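For anyone who wants to sanity-check the Where clause outside of Power Automate, here is a rough sketch of the equivalent ArcGIS REST `query` request. It only builds the URL and does not send anything. The layer URL is a made-up placeholder, and `prime_process` is simply my control field name, so swap in your own.

```python
from urllib.parse import urlencode, urlsplit, parse_qs


def build_query_url(layer_url: str, control_field: str = "prime_process") -> str:
    """Build a feature layer query URL that selects unprocessed records."""
    params = {
        "where": f"{control_field} = 'FALSE'",  # same clause as in the connector
        "outFields": "*",                       # return every attribute
        "returnGeometry": "true",               # optional, mirrors "Return geometry"
        "f": "json",                            # JSON output
    }
    return f"{layer_url.rstrip('/')}/query?{urlencode(params)}"


# Hypothetical layer URL, for illustration only.
url = build_query_url(
    "https://example.com/arcgis/rest/services/Hypothetical/FeatureServer/0"
)
print(url)
```

Pasting a URL like this into a browser (with a valid token, if the layer is secured) is a quick way to confirm the clause returns only the records you expect before wiring it into the flow.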

5.

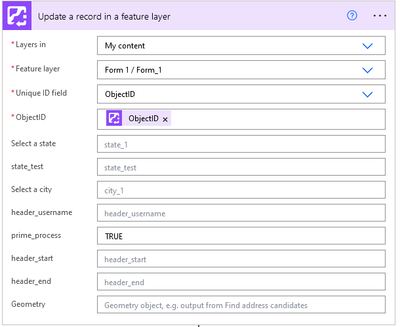

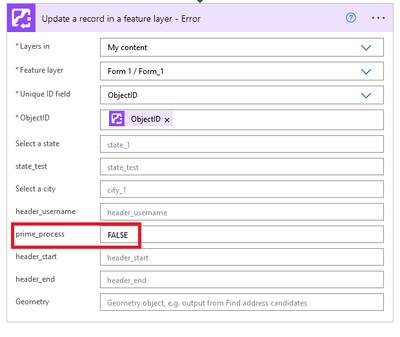

Add "Update a record in a feature layer". The first two fields are again the survey you are building for.

The third field should be "ObjectID". Then, in the fourth field, select your ObjectID from dynamic content. This will automatically nest your step in an "Apply to each". That makes sense, because even if only one item comes back from your Feature Layer, the result is still an array.

Lastly, find your "control field" and toggle it to indicate the item was processed. (e.g., prime_process = TRUE)
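As a point of comparison, this is roughly what that update looks like as an ArcGIS REST `updateFeatures` request body. It is a sketch only: the `OBJECTID` field name and the TRUE/FALSE text values are assumptions based on my own layer, and the connector may build its request differently under the hood.

```python
import json


def build_update_payload(
    object_id: int,
    processed: bool,
    control_field: str = "prime_process",  # assumed control field name
    oid_field: str = "OBJECTID",           # assumed ObjectID field name
) -> dict:
    """Build form parameters that flip the control field on one record."""
    feature = {
        "attributes": {
            oid_field: object_id,
            control_field: "TRUE" if processed else "FALSE",
        }
    }
    # updateFeatures expects "features" as a JSON array of features.
    return {"features": json.dumps([feature]), "f": "json"}


payload = build_update_payload(42, processed=True)
print(payload["features"])
```

The same helper with `processed=False` gives the body for resetting a record later on.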

6.

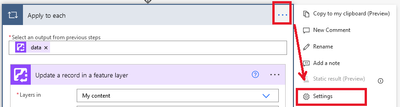

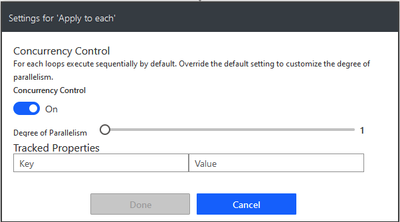

Before continuing, take a step back and select the Settings for your new "Apply to each".

Then turn on "Concurrency Control" and set it to "1"

This ensures that we don't double-process an item, because only one item is handled at a time.

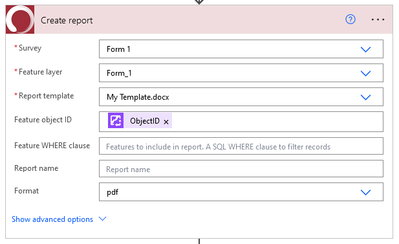

7.

Add "Create report", point it to the same survey you are building for, and configure as necessary.

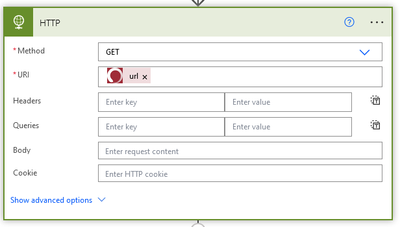

8.

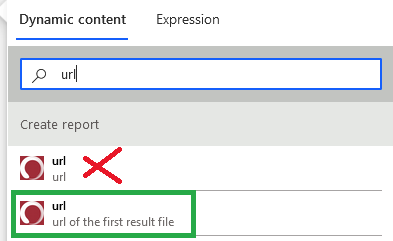

Add "HTTP". There are only two things to set here:

When adding the "url" from dynamic content, be sure you grab the "url of the first result file", as indicated below.

9.

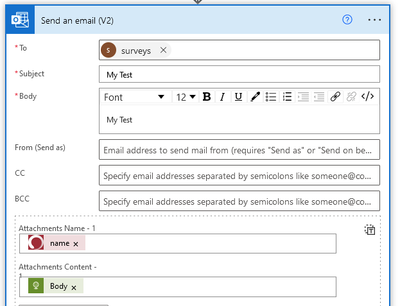

Add "Send an email" (or whatever steps you want really. This is just an example).

The only really important thing I wanted to showcase here is the attachment. For the name, there will be two options, just like "url". Be sure to choose "name of the first result file". For the Attachment Content, use your HTTP's body.
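To make the "first result file" idea concrete, here is a small sketch of picking the name and url of the first file and turning the downloaded bytes into an attachment. The shape of `report_result` (a `resultInfo.resultFiles` list) and the `Name`/`ContentBytes` attachment keys are assumptions for illustration, not something taken from the connector's documentation.

```python
import base64


def first_result_file(report_result: dict) -> tuple[str, str]:
    """Return (name, url) of the first result file in an assumed report result."""
    files = report_result["resultInfo"]["resultFiles"]
    if not files:
        raise ValueError("report produced no result files")
    return files[0]["name"], files[0]["url"]


def build_attachment(name: str, body: bytes) -> dict:
    """Pair the file name with the base64-encoded HTTP response body."""
    return {"Name": name, "ContentBytes": base64.b64encode(body).decode("ascii")}


# Hypothetical report result, for illustration only.
sample = {
    "resultInfo": {
        "resultFiles": [
            {"name": "report_1.docx", "url": "https://example.com/report_1.docx"}
        ]
    }
}
name, url = first_result_file(sample)
attachment = build_attachment(name, b"fake file bytes")
```

The point is just that the name and the content come from two different steps: the name from "Create report", the content from the HTTP step's body.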

10.

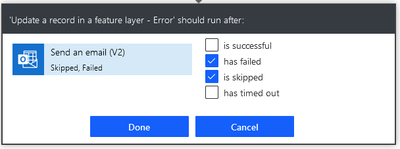

And lastly, add a second "Update a record in a feature layer".

Configure it the same as the first, but set the "control field" back to FALSE. This is our (super basic) error handler.

Then, click on the ellipses (...) and select "Configure run after" and choose "has failed" and "is skipped". Then, hit "Done".
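If it helps to see the whole pattern in one place, here is a minimal Python simulation of the logic: claim a record by setting the control field, process it, and reset the field if anything fails. It works on plain dictionaries instead of a real Feature Layer, `process` is a hypothetical stand-in for the report, HTTP, and email steps, and the `try`/`except` catches a failure in any of them.

```python
from typing import Callable


def run_flow(
    records: list[dict],
    process: Callable[[dict], None],
    control_field: str = "prime_process",
) -> list[int]:
    """Process unhandled records one at a time; return OBJECTIDs that failed."""
    failed = []
    # Equivalent of the Where clause: only records not yet processed.
    pending = [r for r in records if r[control_field] == "FALSE"]
    for record in pending:  # a plain loop handles one record at a time
        record[control_field] = "TRUE"  # first "Update a record"
        try:
            process(record)  # create report, HTTP, send email
        except Exception:
            record[control_field] = "FALSE"  # second "Update a record"
            failed.append(record["OBJECTID"])
    return failed


def flaky_process(record: dict) -> None:
    """Hypothetical processing step that fails for OBJECTID 2."""
    if record["OBJECTID"] == 2:
        raise RuntimeError("report generation failed")


records = [
    {"OBJECTID": 1, "prime_process": "FALSE"},
    {"OBJECTID": 2, "prime_process": "FALSE"},
    {"OBJECTID": 3, "prime_process": "TRUE"},
]
failed = run_flow(records, flaky_process)
print(failed)  # [2]
```

Record 2 ends up back at FALSE, so the next scheduled run picks it up again, which is the whole idea behind the error handler.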

...

In the end, I got this:

Very simplified flow. But it's at least a starting point. I'll be building out my process from here to add in a bunch more steps and redundancy.

Thank you so much for putting these guides together @abureaux !

I'm going to have to update all of my "When a survey response is submitted" triggers for sure. I haven't prioritized it yet because, for the webhooks that create reports, the user will see that the report hasn't been created and just submit again. A nuisance, but manageable.

I know it's the basic first pass, but one suggestion would be to put the actions Update record (1), Create report, HTTP, and Send Email in a Scope, and then set the error-catching Update record (2) to run after fail/skip on the Scope, just in case an action earlier than the email fails.

And I like the HTTP GET as an alternative to the OneDrive actions in other samples as well (and that custom connector look will always throw me off, I'm so used to the green AGOL one! Haha)

Oh, I totally agree! I thought to myself... these should really be in a scope. But I was lazy and convinced myself this was just a "proof of concept". (The "Skipped" part of Run After kind of works in this case, but it isn't a good practice).

Good point on the connector. The custom red was me having fun with soft corp branding, not expecting anyone else to ever see it. I may make a new Custom Connector purely to grab a screen cap of so I can MS Paint that standard green connector in for the future.

Haha fair enough! I had a feeling you'd have thought about it but went basic for the proof of concept. I didn't realize the Skipped would trigger in that case; I thought a failure would just end things.

Ah, I just figured it was the default custom connector and I didn't recognize it because of that. I didn't know you could personalize them, that's pretty cool actually! It's just a small detail in these guides though.