
High Precision Date Field Issue when Registering Tables

I've encountered an issue with registering tables now that the new "high precision" datetime field is available in ArcGIS Pro 3.2 / ArcGIS Enterprise 11.2. When testing identical, empty tables with the same field, ArcGIS Pro sometimes registers the field as a regular "low precision" datetime field and other times as a "high precision" datetime field. This is using MS SQL Server (DATETIME type, not DATETIME2(7)). We do not currently wish to employ the "high precision" datetime type.

When creating a table within ArcGIS Pro 3.2, we can create a datetime field and then migrate that field to high precision using the Esri tool afterwards. That's not an issue.

However, when we use the Register with Geodatabase tool to register pre-existing tables created through SSMS, we encounter inconsistent behavior. We have run several tests with multiple databases and tables: sometimes Pro registers the field as a regular datetime field, and sometimes it registers it as a "high precision" datetime field.

We ran these tests on the exact same tables in multiple databases, all using the regular datetime type in SQL Server. Even so, Pro sometimes registers the field as "high precision" and other times does not.

This is very problematic for us because there is no way (to my knowledge) to "downgrade" a field to a non-high-precision datetime field in Esri software, and the high precision type is only compatible with ArcGIS Enterprise 11.2, meaning we can't publish feature services containing "high precision" datetime fields to lower Enterprise versions.

Is there any way we can "force" ArcGIS Pro to recognize a field as non-high precision when registering a table with the geodatabase? Or is this a bug? We need Pro to be consistent when registering tables with the geodatabase, not seemingly choosing at random whether to use "high precision".

After a bit of back and forth, it turns out this behavior is "expected"; the previous BUG was therefore closed and replaced with this ENH request. If you're interested in seeing this resolved, feel free to add your organization to the enhancement request.

ENH-000163896

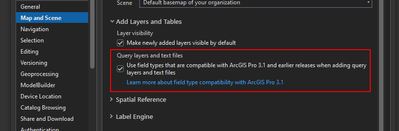

When they create a feature class, they create a date-time field in standard precision. Using an automated Python workflow, they aim to create a database view from the feature class and register it with the geodatabase. However, when the date-time field is created in the database view, it is created in high precision by default, and they are unable to change it to standard precision. The same behavior is reproduced even after changing the ArcGIS Pro option to opt out of the new field types for query layers and text files (checking the "Use field types that are compatible with ArcGIS Pro 3.1 and earlier releases when adding query layers and text files" option under the Map and Scene options). They aim to create the fields in standard precision, since having high precision fields in the database view breaks their workflow.

In ArcGIS Pro 3.2 and later, new field types to support date, time, and big integer values are available. When query layers on unregistered datasets or text files are added to a map in ArcGIS Pro 3.2, these fields may be assigned to the new field types, which are unavailable in earlier releases. By design, when a query layer or database view is created in ArcGIS Pro, its date-time fields are created in high precision. This is expected behavior in ArcGIS Pro 3.2 and higher. However, in scenarios where users need to work with standard precision, there is currently no way to achieve this. Hence, adding an option to the Register with Geodatabase GP tool to format date-time fields in standard precision would let customers achieve this.

Thank you for submitting that, I appreciate it. As I anticipated, it was not a bug because technically, it is working as designed. It's just a terrible implementation of these new field types by Esri.

This issue, caused by Esri, has totally wrecked our Python workflows. How Esri can release new functionality without specifying a "non-high accuracy option" within the Register with Geodatabase GP Tool is beyond me.

Hi everybody,

I just wanted to point out that I had to look for a workaround, because setting the "Use field types that are compatible with ArcGIS Pro 3.1 and earlier releases when adding query layers and text files" option was not enough:

Make sure that you cast your data to avoid high precision field types when creating the query layer. Example with a date type:

select objectid, CAST(data_modif AS TIMESTAMP(0)), shape from devdb.sde.centros_mp where objectid > 10

This way (tested in 3.2.2) I managed to publish without error 00396.

Regards,
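
For anyone scripting this, here is a minimal Python sketch of how the cast query from the post above could be generated, so that every date column in a query layer gets the same `CAST(... AS TIMESTAMP(0))` treatment. The helper name `build_query_layer_sql` and its parameters are hypothetical; the table and column names are taken from the example query. Aliasing the cast back to the original column name is my own assumption and was not part of the tested query. It only builds the SQL text and does not call any Esri API.

```python
def build_query_layer_sql(table, columns, date_columns, where=None):
    """Build a SELECT that casts each listed date column to TIMESTAMP(0).

    Hypothetical helper: it only assembles SQL text. The TIMESTAMP(0) cast
    mirrors the workaround query in the thread; whether it is valid depends
    on the underlying database.
    """
    select_items = []
    for col in columns:
        if col in date_columns:
            # Assumption: alias the cast so the field keeps its original name.
            select_items.append(f"CAST({col} AS TIMESTAMP(0)) AS {col}")
        else:
            select_items.append(col)

    sql = f"SELECT {', '.join(select_items)} FROM {table}"
    if where:
        sql += f" WHERE {where}"
    return sql


# Same table, columns, and filter as the example query in the thread.
query = build_query_layer_sql(
    table="devdb.sde.centros_mp",
    columns=["objectid", "data_modif", "shape"],
    date_columns={"data_modif"},
    where="objectid > 10",
)
print(query)
```

The resulting string would then be used as the query when creating the query layer. Note that the cast syntax is database-specific, so this may not carry over unchanged to the SQL Server case from the original question.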
